Oh pretty boy is going to have a lot of fun over the next 4 years.

The new safety policy

Anthropic’s new safety policy includes a “Frontier Safety Roadmap” that outlines the company’s self-imposed guidelines and safeguards. But the company acknowledged the new framework is more flexible than its past policy.

“Rather than being hard commitments, these are public goals that we will openly grade our progress towards,” the company said in its blog post.

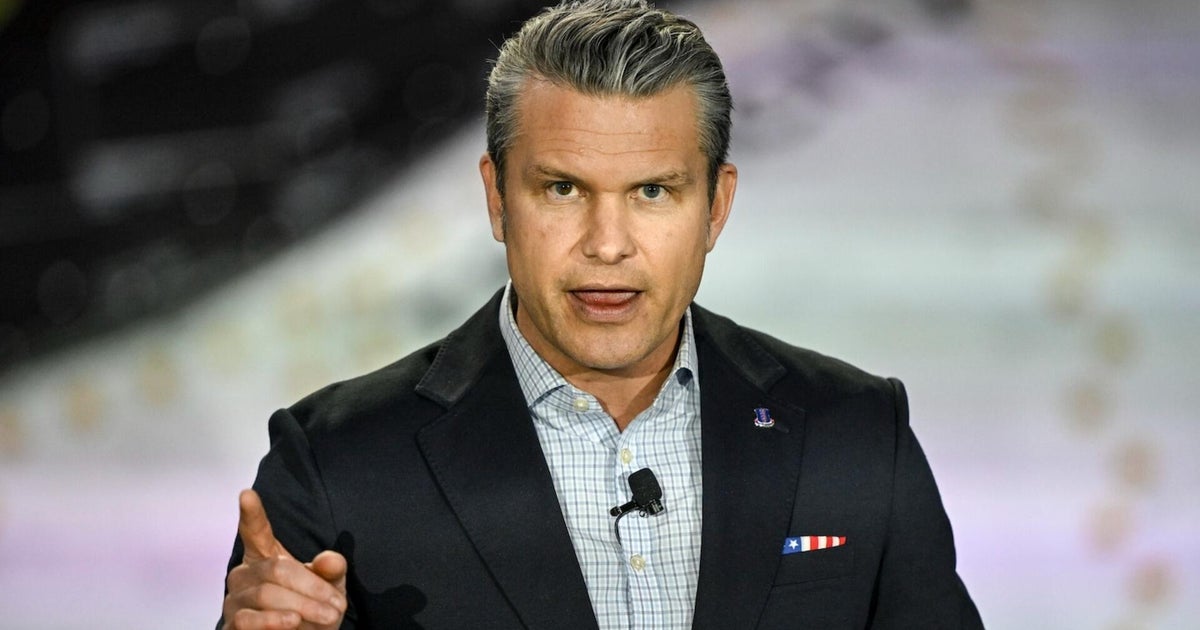

The change comes a day after Defense Secretary Pete Hegseth gave Anthropic CEO Dario Amodei a Friday deadline to roll back the company’s AI safeguards, or risk losing a $200 million Pentagon contract and being put on what is effectively a government blacklist.

Anthropic has concerns over two issues that it isn’t willing to drop, according to a source familiar with the company’s meeting with Hegseth: AI-controlled weapons and mass domestic surveillance of American citizens. Anthropic believes AI is not reliable enough to operate weapons, and there are no laws or regulations yet that cover how AI could be used in mass surveillance, a source said.

AI researchers applauded Anthropic’s stance on social media on Tuesday and expressed concerns about the idea of AI being used for government surveillance.

The company has long positioned itself as the AI business that prioritizes safety. Anthropic has published research showing how its own AI models could be capable of blackmail under certain conditions. The company recently donated $20 million to Public First Action, a political group pushing for AI safeguards and education.

But the company has faced increasing pressure and competition from both the government and its rivals. Hegseth, for example, plans to invoke the Defense Production Act on Anthropic and designate the company a supply chain risk if it does not comply with the Pentagon’s demands, CNN reported on Tuesday. OpenAI and Anthropic have also been locked in a race to launch new enterprise AI tools in a bid to win the workplace.

Alleged Utah Child Predator and Creator of the “Squatty Potty” Indicted After Allegedly Receiving Child Sexual Abuse Material

An indictment was unsealed today in the District of Utah following the arrest of a Southern Utah entrepreneur, and original co-founder and creator of the “Squatty Potty,” after he was charged with receiving sexually explicit images of a child.

Robert Edwards, 50, of Ivins, Utah, was indicted by a federal grand jury on February 10, 2026. He was arrested on February 12, 2026, in Washington County, Utah. During his initial appearance on the indictment, he pleaded not guilty and was remanded to the U.S. Marshals Service by U.S. Magistrate Judge Paul Kohler in St. George.

According to the allegations in court documents, beginning in March 2021, and continuing through November 2025, in the District of Utah, and elsewhere, Edwards knowingly received multiple images of child sexual abuse material (CSAM). In March 2021, an undercover FBI agent assumed the identity of an online profile account and joined a group chat used to trade child sexual abuse material. The online meeting room was viewing a collection of child sexual abuse material videos, which were being streamed on the main screen. Participants in the meeting were visible, including one user later identified as Edwards.

This has escalated. Everyone has their price.

A few years ago, a group of developers and engineers from OpenAI had an ethical disagreement with Elon Musk and other backers of OpenAI. Their concern was that OpenAI wasn't putting safeguards in place to protect humans from AI's actions. Ultimately, they left OpenAI and started their own company, Anthropic. Anthropic was very vocal about their intent to design AI in an "ethical" framework. Among their "guardrails" were two ethical guidelines that they said they would never violate:

- AI should never be used for weapons that can be used against the human race and

- AI should never be used to surveil the public.

Pete Hegseth designates Anthropic as supply-chain risk amid feud

President Trump ordered the federal government to cut ties with tech start-up Anthropic. Defense Secretary Pete Hegseth also said he will designate Anthropic a supply-chain risk to national security. Brendan Bordelon, AI and tech influence reporter for Politico, joins "The Daily Report" to discuss.

Senators urge ceasefire in Pentagon’s fight with Anthropic

Key lawmakers in charge of defense policy want both sides to extend talks to avoid a Friday evening deadline that could lead to sanctions for Anthropic.

Top senators in charge of defense policy want Defense Secretary Pete Hegseth and Anthropic CEO Dario Amodei to extend negotiations over the artificial intelligence startup’s red lines on the use of its technology — a request that comes hours before the Pentagon’s Friday evening deadline for Anthropic to lift the restrictions or face severe penalties.

In a letter sent Friday to Hegseth and Amodei and obtained by POLITICO, Senate Armed Services Committee leaders Roger Wicker (R-Miss.) and Jack Reed (D-R.I.) joined top Senate defense appropriators Mitch McConnell (R-Ky.) and Chris Coons (D-Del.) to express “concern over the escalatory direction of negotiations between the Department of Defense and Anthropic.”

President Donald Trump said that he was banning federal agencies from using the services of AI company Anthropic. The declaration comes after months of increasingly heated rhetoric between the Defense Department and Anthropic over the military’s use of the company's systems.

Supreme Court blocks redrawing of Republican-held congressional district in New York over liberal dissent

The US Supreme Court on Monday approved an emergency appeal from a Republican congresswoman in New York who asked the justices to block a state court ruling that ordered her Staten Island-based district to be redrawn ahead of the midterm election.

The high court’s three liberal justices dissented from the decision.

Rep. Nicole Malliotakis and state GOP election officials had urged the Supreme Court to allow New York’s current map to be used, an outcome that will benefit Republicans in the midterm amid a flurry of mid-decade redistricting in other parts of the country.